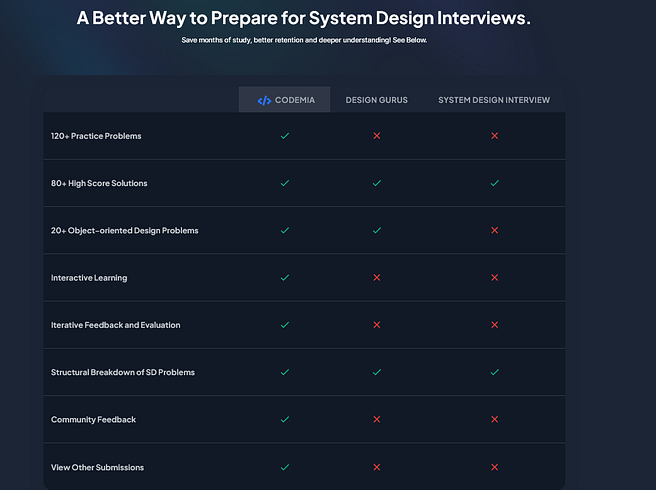

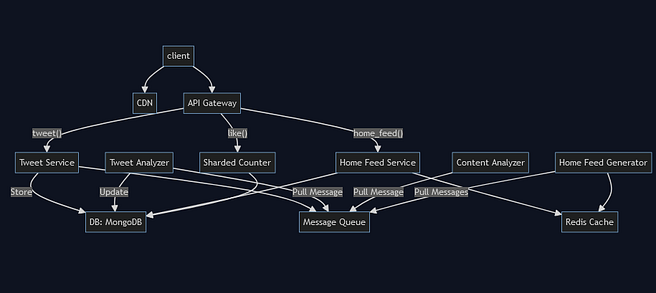

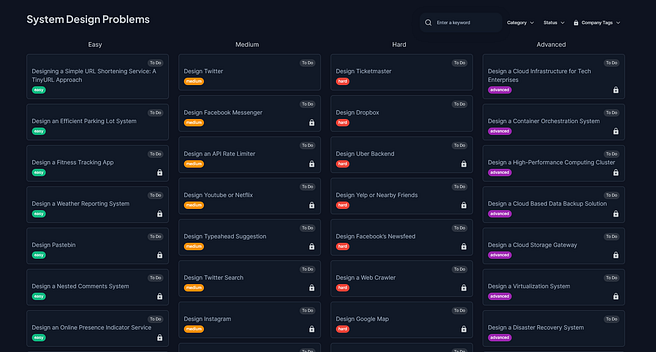

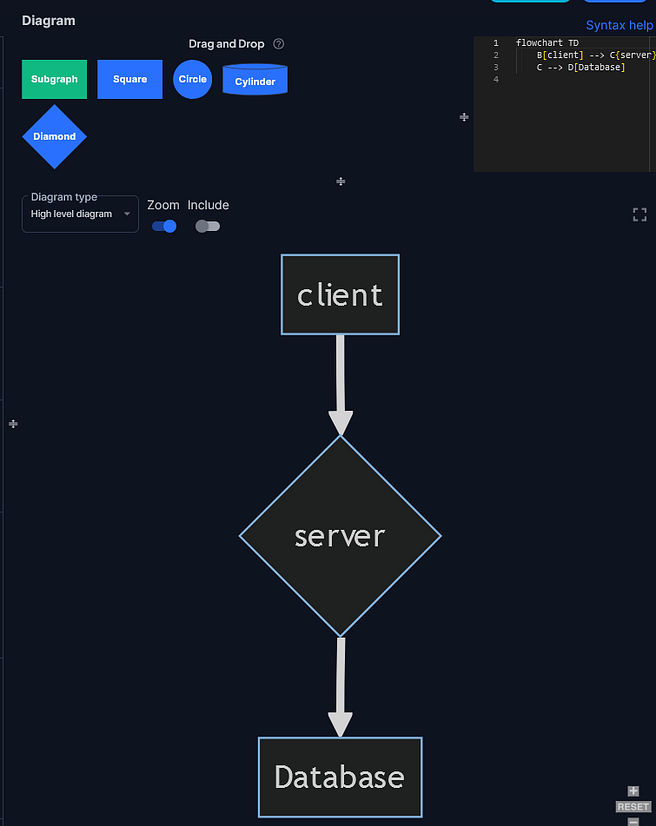

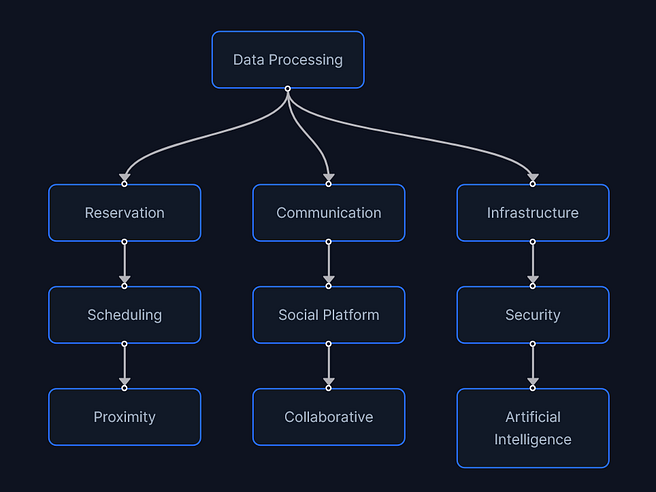

Hello guys, if you’re preparing for system design interviews, especially for FAANG or other top-tier tech roles, you’ve likely encountered Bugfree.ai. In the past, I have tried various online platforms for learning system design, such as ByteByteGo, but the one thing I missed was interactive online practice: something like LeetCode, where I can solve problems, get my solutions evaluated, and earn points to move up the leaderboard. During my search, I recently came across this amazing platform, which brands itself as “LeetCode for System Design” and offers a mix of mock interviews, structured question practice, real-time feedback, and more.

But does it truly deliver value? Let’s break it down.